Visual clues aid autonomous medical robot in journey through human body

Researchers in the Paul M. Rady Department of Mechanical Engineering are developing a robot that may one day change how millions of people across the U.S. get colonoscopies, making these common procedures easier for patients and more efficient for doctors.

Micah Prendergast is the first author on a new paper published in IEEE Xplore that details an autonomous navigation strategy for these tiny machines as they traverse the intestines. Making these devices more autonomous would make them easier for doctors to use.

Prendergast graduated from CU Boulder with his PhD in 2019 and now works as a postdoctoral researcher at Technische Universiteit Delft (TU Delft) in the Netherlands. We asked him about the findings and about working with Professor Mark Rentschler in the Advanced Medical Technologies Laboratory.

Question: How would you describe the work and results of this paper? What are the applications in the real world?

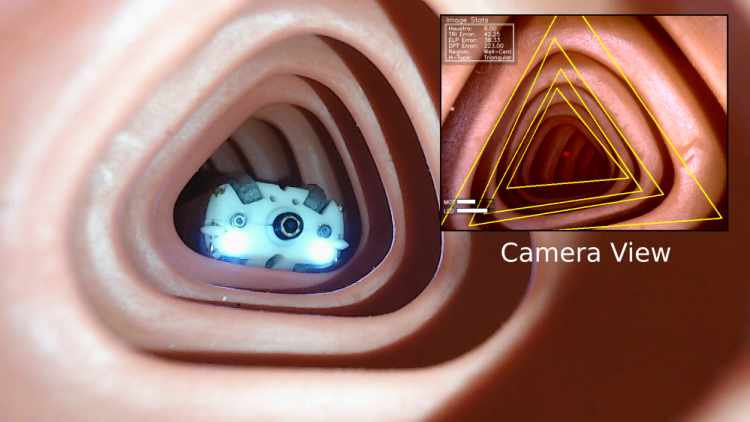

Answer: This paper essentially presents a method for autonomous navigation of the colon by a robotic endoscope that uses a single camera to sense its environment. In simple terms, you can think of it as a self-driving car that relies only on a camera for information about its surroundings. We might use large visual cues such as buildings, street signs, and city parks to tell us where we are in the world, and information such as the road in front of us to tell us where we are able to go. The difference here is that we are in an unfamiliar world inside the human body, so the things we would typically use to guide us are vastly different.

This paper attempts to identify some of these macro visual cues and establish straightforward geometric interpretations of them for autonomous driving and decision-making inside the body. There is a bit more to it, though. We consider some common, specific scenarios that can make mobility difficult, such as sharp turns or instances where we have very little information at all, for example when the camera is blocked. But in general, the goal of this paper was to demonstrate that we can use our visual intuition and basic geometry to take a real step toward autonomous navigation.

Q: Is this a research topic or area you were interested in before joining the Rentschler Lab?

A: I would not say I was interested in this particular topic, but I have always been interested in robotic perception and decision-making. This is a very interesting, applied example of that area using a somewhat strange robot in a very atypical environment. Personally, I find thinking through these novel situations and challenges interesting because it forces me to reflect on my own abilities and perceptions that I might otherwise consider to be trivial or take for granted.

In reality, many of the things humans do easily are mind-blowingly complex! So these challenges require reducing complex problems into their most meaningful and simple pieces – bringing some order to the chaos, if you will. I think this aspect can be cathartic. All that being said, sometimes this work is overwhelmingly frustrating, but I never regret doing it.

Q: Was there a particular aspect of this work that was hard to complete?

A: A large part of this work was figuring out how to describe, in the simplest terms, the macro features we see in this environment, and to do so in a way that lets the robot make sense of them from any viewing position. It took quite a lot of effort to land on a reasonable approach for that task. I have no doubt that nearly every aspect of the current solution can be improved, but I consider this work a useful intermediate step that genuinely demonstrates the utility of these methods.

Q: What research questions are still to be answered?

A: Quite a few. Working toward ways to better handle the deformation of the world – what do we do when the macro features we are relying on move or change shape all on their own? – is a very big question, for example. Another would be, how can we better work within these strange lighting conditions with highly reflective surfaces? These are big problems that, in many ways, we don’t have to consider for most other mobile robot applications. And while this paper demonstrates that we can have some success without completely addressing these problems, I think they will be important for developing a fully robust system that is truly a step up from conventional endoscopy.

Q: What is it like working and studying at CU Boulder?

A: I am proud to have been a part of the Advanced Medical Technologies Laboratory and to have worked with Professor Rentschler. I think he has done an exceptional job at bringing together a diverse group of talented students and at leveraging the many perspectives and scientific backgrounds of that diverse group to improve the quality of the work the lab produces, all while fostering the many talents of the group members themselves. The lab will always be a family to me, and I am proud to have worked with so many brilliant and wonderful people.

“A Real-Time State Dependent Region Estimator for Autonomous Endoscope Navigation” was supported in part by the National Science Foundation (Grant No. 1849357), the CU Boulder Innovative Seed Grant Program, and the Colorado Office of Economic Development and International Trade. This research was also conducted with government support under and awarded by the Department of Defense, Air Force Office of Scientific Research, and National Defense Science and Engineering Graduate (NDSEG) Fellowship, 32 CFR 168a. Other CU Boulder authors include Gregory Formosa, Mitchell Fulton and Mark Rentschler from the Department of Mechanical Engineering and Christoffer Heckman from the Department of Computer Science.