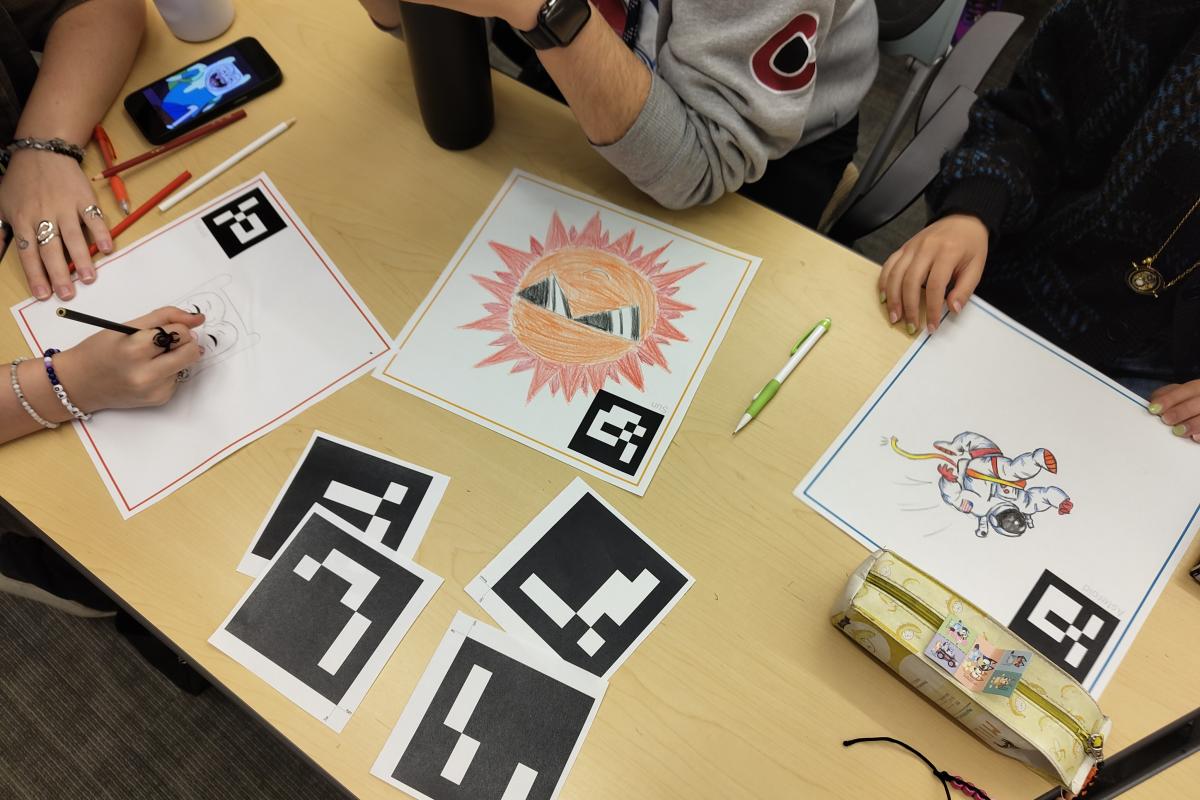

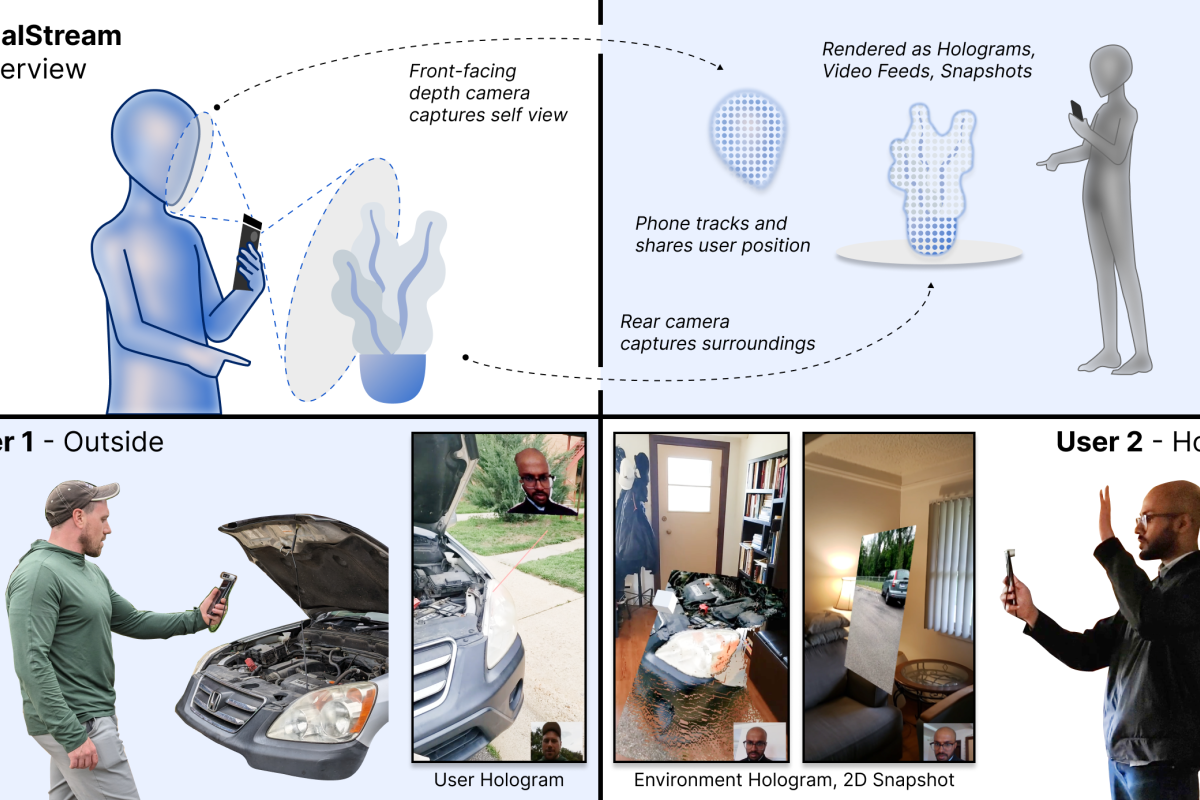

ATLAS Projects

The ATLAS Institute is home to faculty and students whose research interests transcend the traditional disciplines of engineering, design, science and art. We make tangible and digital tools and methods that shape how people interact with the world, and we invite you to explore current and past projects developed in our labs.