Leap in lidar could improve safety, security of new technology

Whether they’re on top of a self-driving car or embedded inside the latest gadget, Light Detection and Ranging (lidar) systems will likely play an important role in our technological future, enabling vehicles to ‘see’ in real time, phones to map three-dimensional images and video games to enhance augmented reality.

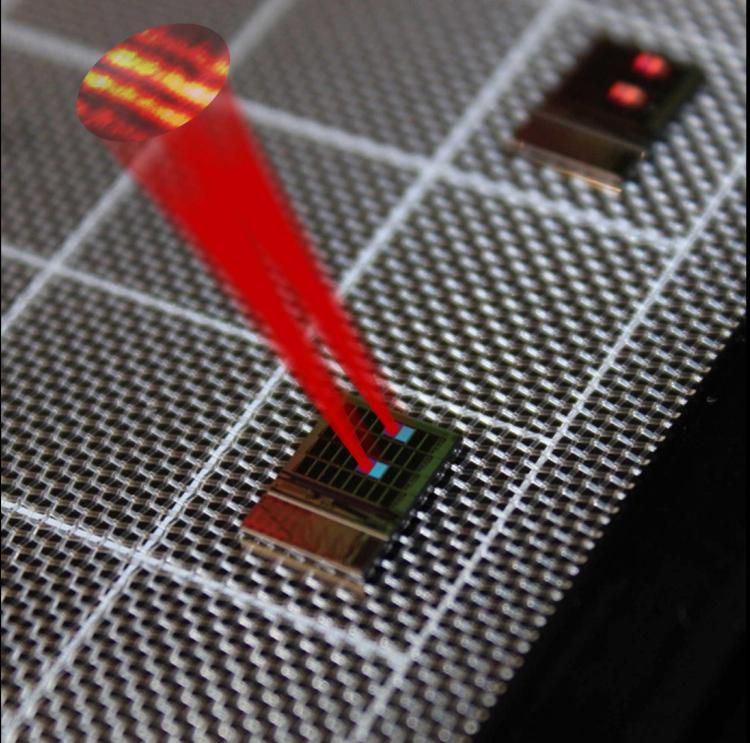

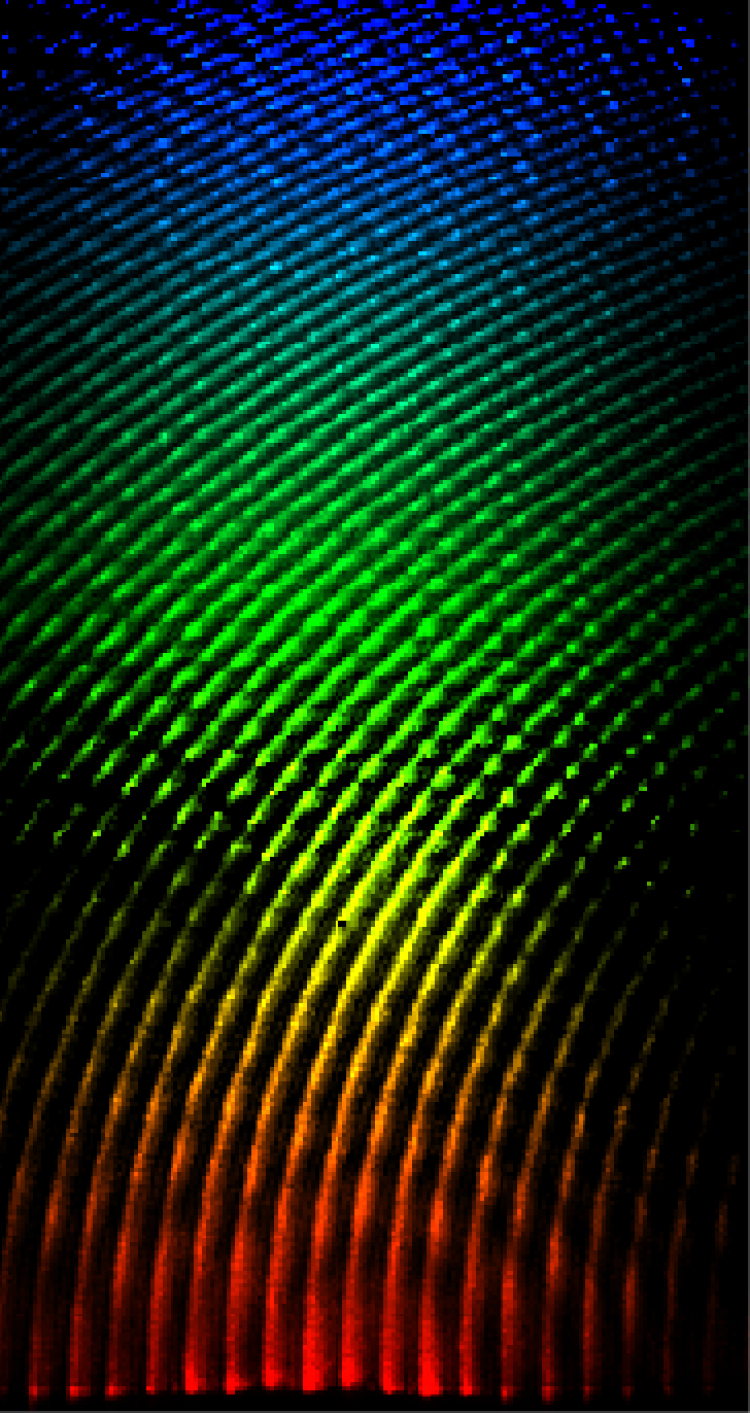

A silicon chip with a tiled array of serpentine optical phased array (SOPA) tiles. The 32 tiles in the 8-by-4 array have slightly differing grating designs, showing here two matching pairs of tiles “lighting up” at this viewing angle. Drawn superimposed are beams from two matching tiles and the far field beam interference pattern demonstrating tiled beam forming. (Credit: Bohan Zhang and Nathan Dostart)

The challenge: these 3-D imaging systems can be bulky, expensive and hard to shrink down to the size needed for these up-and-coming applications. But CU Boulder researchers are one big step closer to a solution.

In a new paper, published in Optica, they describe a new silicon chip—with no moving parts or electronics—that improves the resolution and scanning speed needed for a lidar system.

“We're looking to ideally replace big, bulky, heavy lidar systems with just this flat, little chip,” said Nathan Dostart, lead author on the study, who recently completed his doctorate in the Department of Electrical and Computer Engineering.

Current commercial lidar systems use large, rotating mirrors to steer the laser beam and thereby create a 3-D image. For the past three years, Dostart and his colleagues have been working on a new way of steering laser beams called wavelength steering—where each wavelength, or “color,” of the laser is pointed to a unique angle.

They have developed a way to steer the beam along two dimensions simultaneously, instead of only one, and they do it with color alone, using a “rainbow” pattern to take 3-D images. Because the beams are controlled simply by changing colors, multiple phased arrays can be controlled simultaneously to create a bigger aperture and a higher-resolution image.
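To illustrate the general idea of wavelength steering, here is a minimal sketch based on the textbook grating equation, in which light leaving a waveguide grating exits at an angle that depends on its wavelength. This is not the serpentine design described in the paper. The function name, the grating period and the effective index below are hypothetical values chosen only for illustration, and the sketch ignores the fact that the effective index itself varies with wavelength.

```python
import math


def emission_angle_deg(wavelength_um, grating_period_um, effective_index, order=1):
    """Return the emission angle (degrees from the chip normal, into air)
    predicted by the standard grating equation:

        sin(theta) = n_eff - order * wavelength / period

    All parameter values used with this function are illustrative assumptions.
    """
    sin_theta = effective_index - order * wavelength_um / grating_period_um
    if abs(sin_theta) > 1.0:
        raise ValueError("No propagating beam for these parameters")
    return math.degrees(math.asin(sin_theta))


# Hypothetical grating: 0.6 um period, effective index 2.8 (assumed, not from the paper).
PERIOD_UM = 0.6
N_EFF = 2.8

# Sweep the laser "color" and see that each wavelength maps to its own angle.
angles = {
    wl: emission_angle_deg(wl, PERIOD_UM, N_EFF)
    for wl in (1.50, 1.55, 1.60)
}

for wl, angle in angles.items():
    print(f"{wl:.2f} um -> {angle:.1f} degrees")
```

In this toy model, tuning the laser wavelength sweeps the beam without any moving parts, which is the property the researchers exploit.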

“We've figured out how to put this two-dimensional rainbow into a little teeny chip,” said Kelvin Wagner, co-author of the new study and professor of electrical and computer engineering.

The end of electrical communication

Autonomous vehicles are currently a $50 billion industry, projected to be worth more than $500 billion by 2026. While many cars on the road today already have some elements of autonomous assistance, such as enhanced cruise control and automatic lane-centering, the real race is to create a car that drives itself with no input or responsibility from a human driver. In the past 15 years or so, innovators have realized that in order to do this, cars will need more than just cameras and radar: they will need lidar.

Lidar is a remote sensing method that uses laser beams, pulses of invisible light, to measure distances. These beams of light bounce off everything in their path, and a sensor collects these reflections to create a precise, three-dimensional picture of the surrounding environment in real time.

Lidar is like echolocation with light: it can tell you how far away each pixel in an image is. It’s been used for at least 50 years in satellites and airplanes, to conduct atmospheric sensing and measure the depth of bodies of water and heights of terrain.
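The “echolocation with light” idea can be sketched in a few lines, assuming a simple pulsed, time-of-flight measurement: the light travels to the object and back, so the distance is the speed of light multiplied by half the round-trip time. The function names and sample timings below are hypothetical, and real systems (including the chip described here) may use other ranging schemes.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the meter


def distance_from_round_trip(round_trip_seconds):
    """Distance to a reflecting object, given the pulse's round-trip time.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_seconds < 0:
        raise ValueError("Round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def depth_image(round_trip_times):
    """Convert a 2-D grid of round-trip times (seconds) into distances (meters),
    one value per pixel."""
    return [[distance_from_round_trip(t) for t in row] for row in round_trip_times]


# Hypothetical 2x2 "image" of round-trip times, in seconds (made-up values).
sample_times = [
    [1.0e-6, 0.5e-6],
    [2.0e-7, 1.0e-7],
]

for row in depth_image(sample_times):
    print([f"{d:.2f} m" for d in row])
```

A round trip of one microsecond corresponds to an object roughly 150 meters away, which gives a sense of how fast the timing electronics in such a system must be.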

While great strides have been made in reducing the size of lidar systems, they remain by far the most expensive part of self-driving cars, costing as much as $70,000 each.

In order to work broadly in the consumer market one day, lidar must become even cheaper, smaller and less complex. Some companies are trying to accomplish this feat using silicon photonics, an emerging area of electrical engineering that uses silicon chips to process light.

The research team’s new finding is an important advancement in silicon chip technology for use in lidar systems.

“Electrical communication is at its absolute limit. Optics has to come into play and that's why all these big players are committed to making the silicon photonics technology industrially viable,” said Miloš Popović, co-author and associate professor of engineering at Boston University.

The simpler and smaller that these silicon chips can be made—while retaining high resolution and accuracy in their imaging—the more technologies they can be applied to, including self-driving cars and smartphones.

Rumor has it that the upcoming iPhone 12 will incorporate a lidar camera, like that currently in the iPad Pro. This technology could not only improve its facial recognition security, but one day assist in creating climbing route maps, measuring distances and even identifying animal tracks or plants.

“We're proposing a scalable approach to lidar using chip technology. And this is the first step, the first building block of that approach,” said Dostart, who will continue his work at NASA Langley Research Center in Virginia. “There's still a long way to go.”

Additional co-authors of this study include Michael Brand and Daniel Feldkhun of CU Boulder, and Bohan Zhang, Anatol Khilo, Kenaish Al Qubaisi, Deniz Onural and Miloš A. Popović of Boston University.