HapticBots give form to virtual surfaces

When users reach out to touch a visible surface in virtual reality, it normally isn't there. But a team of researchers in the ATLAS Institute's THING Lab has been exploring ways to make the virtual tangible. Building on their past work, PhD graduate Ryo Suzuki and Assistant Professor Daniel Leithinger recently published a paper, presented at ACM's Symposium on User Interface Software & Technology, that introduces an intriguing application of swarm robotics: small robots that move dynamically to provide physical touchpoints on demand whenever the user reaches out to touch a point in virtual space.

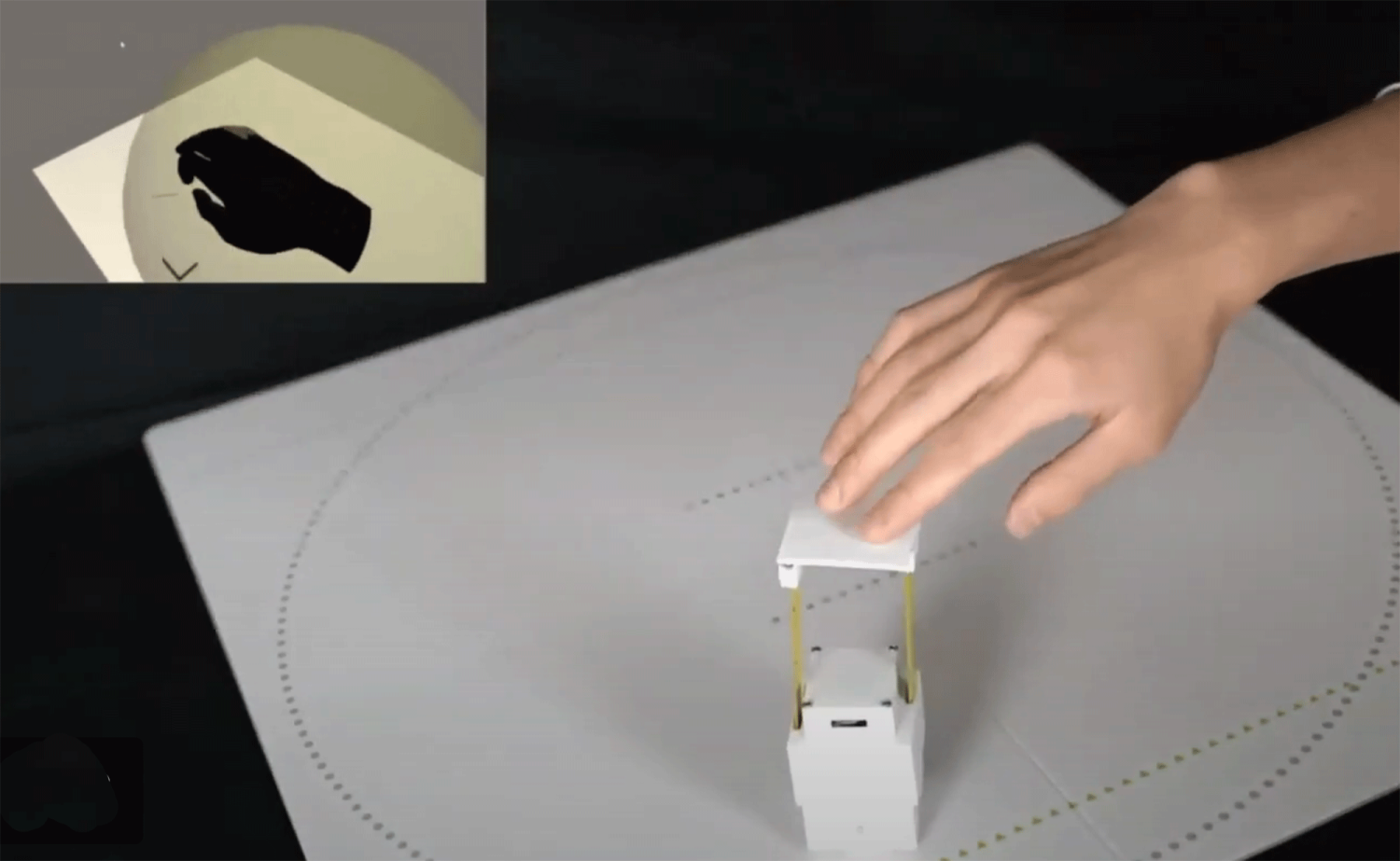

Dubbed HapticBots, the Rubik's Cube-sized robots reach the user's hand just in time to provide haptic feedback. Each robot can render only the patch of surface touched by a single fingertip, but a fast-moving, shape-changing swarm of them gives the illusion of a large virtual surface. Each robot controls a small piece of the surface, about the size of two to three fingertips. It is equivalent to one pin of a shape display, except that it can be moved around, rotated, raised and lowered to meet a fingertip as it approaches the virtual object. Users can also pick up the small robots and deploy them to a different surface. Possible applications for the technology include remote collaboration, education and training, design and 3D modeling, and gaming and entertainment.
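The article does not describe the robots' control software. As a rough illustration of the "movable pin" idea, here is a minimal Python sketch, assuming each robot is known by its tabletop position and the height of its top, and that the system already knows where a fingertip is about to land. The names `Robot`, `assign_robot`, `MIN_HEIGHT` and `MAX_HEIGHT` are hypothetical, and the greedy "send the nearest idle robot" rule is a simplification chosen for clarity, not the researchers' algorithm. It ignores rotation, path planning, collision avoidance and fingertip prediction.

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical actuator limits in metres; not taken from the HapticBots paper.
MIN_HEIGHT = 0.0
MAX_HEIGHT = 0.30


@dataclass
class Robot:
    """One mobile 'pin': a tabletop position plus a raisable top."""
    name: str
    x: float
    y: float
    height: float = 0.0
    busy: bool = False


def assign_robot(
    robots: List[Robot],
    target_x: float,
    target_y: float,
    surface_height: Callable[[float, float], float],
) -> Optional[Robot]:
    """Send the nearest idle robot to where a fingertip is expected to touch.

    surface_height(x, y) gives the height of the virtual surface at (x, y).
    Returns the chosen robot, or None if every robot is already busy.
    """
    idle = [r for r in robots if not r.busy]
    if not idle:
        return None
    # Greedy choice: the robot with the shortest straight-line trip
    best = min(idle, key=lambda r: math.hypot(r.x - target_x, r.y - target_y))
    # Move the robot under the predicted touch point
    best.x, best.y = target_x, target_y
    # Raise or lower its top to match the virtual surface, within actuator limits
    best.height = max(MIN_HEIGHT, min(MAX_HEIGHT, surface_height(target_x, target_y)))
    best.busy = True
    return best


if __name__ == "__main__":
    # A gently sloped virtual surface: 10 cm high at x = 0, rising 0.25 m per metre in x
    slope = lambda x, y: 0.10 + 0.25 * x
    bots = [Robot("a", 0.0, 0.0), Robot("b", 0.5, 0.5)]
    chosen = assign_robot(bots, 0.4, 0.4, slope)
    print(chosen.name, round(chosen.height, 2))  # b 0.2
```

In this sketch, robot "b" is chosen because it is closer to the touch point, and its top is raised to the 20 cm height the virtual surface has there. A real system would also have to predict the touch point early enough for the robot to arrive in time, which is what makes the "just in time" behaviour described above hard.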

Suzuki, now an assistant professor of computer science at the University of Calgary, was the paper's lead author; he collaborated with a team from Microsoft Research and with his former advisor, Leithinger.

[video:https://www.youtube.com/watch?v=cFq5JNtXSKo]