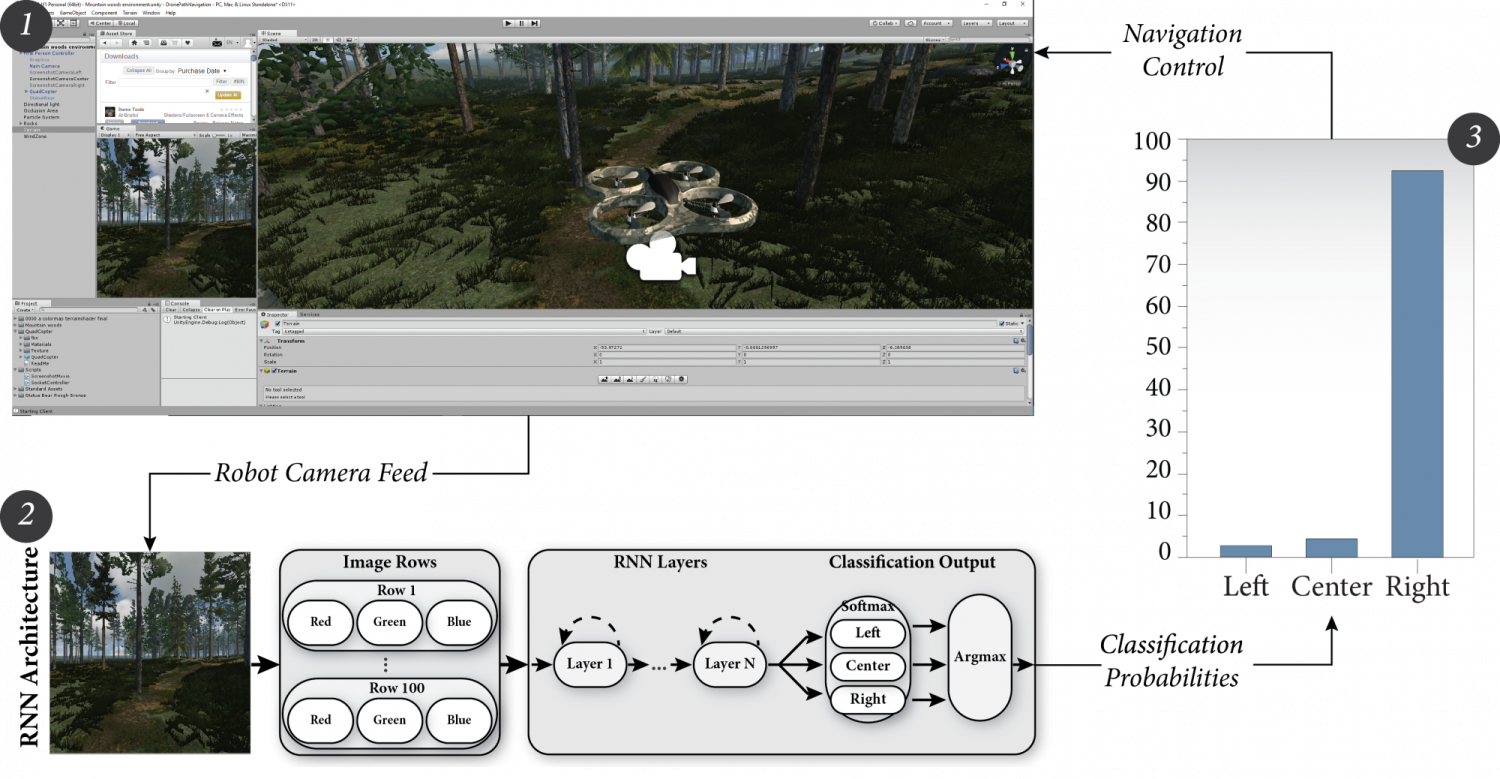

Robots hold promise in many outdoor scenarios, such as search and rescue, wildlife management and the collection of data to improve environmental, climate and weather forecasting. However, autonomous navigation of outdoor trails remains a challenging problem. Recent work has applied deep learning to trail navigation with state-of-the-art results, but such approaches rely on large amounts of training data. Collecting and annotating sufficient datasets may not be feasible or practical in many situations, especially as trail conditions may change due to seasonal weather variations, storms and natural erosion. In this paper, we present an approach to address this issue through virtual-to-real-world transfer learning, using a variety of deep learning models trained to classify the direction of a trail in an image. Our approach uses synthetic data gathered from virtual environments for model training, bypassing the need to collect large numbers of real outdoor images. Methods such as these may allow autonomous navigation models to be trained for real-world deployment in environments that lack sufficient training data, such as space, planetary surfaces and wilderness.