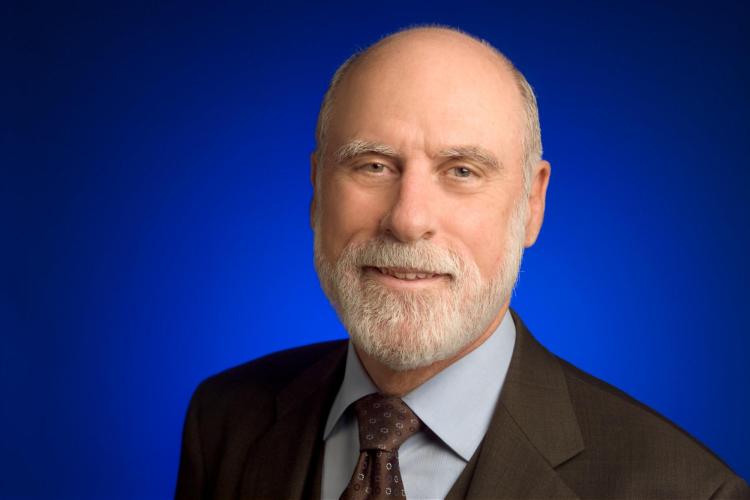

Vint Cerf, co-founder of the Internet, to speak on ‘digital preservation’

Google’s Chief Internet Evangelist to give Gamow lecture at CU Boulder Feb. 10

Having a commercial email address in the early to mid-1990s, whether from America Online (AOL), CompuServe, Prodigy or EarthLink, marked a user as an “early adopter” of Internet technology in the United States.

But the origins of the Internet go much further back. Indeed, it was all the way back in 1973 that Vinton G. Cerf and Bob Kahn — known today as the fathers of the Internet — created the fundamental architecture, known as the TCP/IP protocol, that makes the Internet what it is today.

Vinton G. Cerf had a vision in the past and has a hope for the Internet's future.

Cerf, who now serves as Google’s “Chief Internet Evangelist,” will speak on “digital preservation” at the 52nd George Gamow Memorial Lecture at 7:30 p.m. Saturday, Feb. 10 at Macky Auditorium on the University of Colorado Boulder campus. The lecture is free and open to the public.

The George Gamow Memorial lecture series honors the eminent Russian-born physicist, who joined the faculty of the CU Boulder Department of Physics in 1956. Speakers have included 26 Nobel Prize recipients and such notable figures as Jane Goodall, Robert D. Ballard and Linus Pauling.

“We are delighted to have Vint Cerf as the 52nd Gamow lecturer,” says Paul Beale, professor of physics at CU Boulder. “In 1974, Vint Cerf and Bob Kahn published the protocol that first allowed computers to exchange digital data across phone lines. Today, that same protocol is used by billions of users and devices to connect across the internet.

“Our technological society has been built on their invention. Vint has been an international leader in the development of the Internet throughout his career. He remains an innovator and visionary in the field and is a leading proponent for an open Internet that is equally accessible to all. Vint’s leadership in the field and his ability to communicate exciting ideas to the public make him an ideal Gamow lecturer.”

Preserving digital knowledge — everything from photos and video to spreadsheets, documents, databanks and games — isn’t as simple as it may sound.

“Humans produce trillions of photos and countless exabytes of complex digital objects every year,” Cerf says. “But formats are constantly changing, and all this data is potentially ephemeral. We need to find a way to store all that data in a way that will remain accessible 100 years from now.”

But there are numerous obstacles to preserving digital media for the long-term.

First, the media used to store digital content, whether VHS tapes, 5¼-inch floppy disks or CDs, are fragile and degrade over time, and the devices that read them may no longer work. Even finding a working VHS player can be difficult.

Even if you can access historical data, there’s no guarantee you can interpret it with current software, hardware or operating systems. And there are legal issues: if a company has disappeared, obtaining access to source or executable code might prove impossible. Finally, there is a question of sustainability — who is going to create and preserve such archives for 100 years, or 200 or 500?

“How many organizations and businesses last that long?” Cerf asks.

Cerf proposes what he calls “digital vellum,” a system that could “run old software on top of emulated hardware, old apps on top of old operating system hardware, possibly in the cloud.” He also would like to see laws changed to allow archives and libraries to access proprietary source code for the purposes of preservation.

As for who will do the preserving, he argues that we should study organizations that have withstood the test of time, not just for centuries but for millennia, such as the Catholic Church; Islamic institutions, which played a critical role in preserving knowledge through the Middle Ages; and universities.

“These are institutions that have lasted a long time,” he says. “We should examine what has allowed them to survive and imbue new institutions with some of those properties.”

Cerf and Kahn were working for the U.S. Defense Advanced Research Projects Agency, or DARPA, when they created the TCP/IP protocols, a two-layer system of conventions, formats and procedures that allow communication between different kinds of software, hardware, operating systems and heterogeneous networks.

To illustrate the concept, Cerf offers an old-school analogy: postcards.

“A postcard has a ‘to’ and ‘from’ address and some content. But the postcard doesn’t actually know how it’s being carried. Neither does an Internet packet” — a formatted unit of data — “whether it’s being carried by optic fiber, radio link, a satellite channel,” he says.

“The postcard also doesn’t know what it’s carrying, which means the post office doesn’t care, either. That’s also true of packets: The network doesn’t care, and the content is being interpreted at the edges of the network, where computers are.

“This ‘ignorance’,” Cerf says, “is important.”

But packets are like postcards, and if postcards are all you have to work with, you face certain problems: If you want to send a book to a friend through the postal system, you would have to cut up pages to fit on a postcard. They might get out of order, some might not arrive, your friend’s mailbox might hold fewer postcards than you send, and you wouldn’t know he received them until he sent you a postcard back, which might itself be lost.

The TCP/IP protocol is essentially a method of flow control and error correction that circumvents such problems. It was created, Cerf says, to be “ignorant” so that it can “run on top of everything else,” which means software developers can adapt any new communication technology to mesh with the Internet. In essence, it allows divergent computer technologies and networks to operate as if they are all part of a common network.
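For readers who think in code, the postcard problems can be sketched in a few lines of Python. This is a toy illustration rather than real TCP: the function names, the chunk size, the loss rate and the `window` limit (standing in for the size of the friend's mailbox) are all assumptions made for the example, and the receiver's reply is assumed never to get lost, which a real protocol cannot take for granted.

```python
import random

def to_postcards(message, size):
    """Cut the message into numbered chunks, like pages copied onto postcards."""
    return [(seq, message[i:i + size])
            for seq, i in enumerate(range(0, len(message), size))]

def unreliable_post(postcards, loss_rate, rng):
    """Deliver postcards in scrambled order, dropping some at random."""
    delivered = [card for card in postcards if rng.random() >= loss_rate]
    rng.shuffle(delivered)
    return delivered

def send_reliably(message, size=8, loss_rate=0.3, window=4, seed=1):
    """Resend unacknowledged postcards until the receiver holds them all."""
    rng = random.Random(seed)
    postcards = to_postcards(message, size)
    received = {}                  # receiver's mailbox, keyed by sequence number
    outstanding = list(postcards)  # postcards not yet acknowledged
    rounds = 0
    while outstanding:
        rounds += 1
        # Flow control: never mail more than the mailbox can hold at once.
        for seq, chunk in unreliable_post(outstanding[:window], loss_rate, rng):
            received[seq] = chunk  # arrival order does not matter
        # The receiver's reply says which postcards arrived (assumed reliable here).
        outstanding = [card for card in postcards if card[0] not in received]
    # Sequence numbers put the pages back in their original order.
    text = "".join(received[seq] for seq in sorted(received))
    return text, rounds
```

Calling `send_reliably("some long message")` returns the reassembled text together with the number of mailing rounds it took. The sequence numbers handle reordering, the resend loop handles loss, and the window handles the small mailbox.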

Because of that flexibility, the Internet, launched on Jan. 1, 1983, triumphed over numerous other networking approaches created in the 1970s and ’80s.

“We wanted to future-proof the protocol,” Cerf says. “We knew we didn’t know what new technologies might come along, so we wanted to create something that could work long term on a non-national, global scale.”

TCP/IP has allowed the Internet to grow organically, with little centralized oversight except for such things as the issuance of unique domain names and allocation of unique numerical Internet addresses.

Cerf and Kahn also advocated to ensure that the public would have access to the Internet. By the late 1980s, U.S. government agencies had created numerous “backbone” networks for electronic communication, but none allowed public use.

“Why let the unwashed public into our playpen?” was the prevailing sentiment, Cerf says. “I realized that if we didn’t do something, the public was never going to get access to it. But there was no commercial traffic allowed on government-sponsored backbones. I wondered, ‘How do I break that rule?’”

Kahn by that time had founded the Corporation for National Research Initiatives and Cerf was working for MCI Communications. They asked the Federal Networking Council for permission to connect MCI’s private electronic mail system, MCI Mail, to the Internet mail system, just to see if it could be done. The agency reluctantly agreed, but limited the experiment to one year.

As soon as they connected the two systems and traffic began to flow between MCI and the Internet, other private email service companies recognized the potential and went to the networking council to demand they be allowed to do the same. Within a year, the first commercial Internet service providers were doing business with the public.

“We said it was a test. But the true purpose was to break the barrier against commercial traffic. I just thought the public would benefit from the Internet,” Cerf says, perhaps understating the case.

When: 7:30 p.m. Saturday, Feb. 10

Where: Macky Auditorium, University of Colorado Boulder campus

Info: www.colorado.edu/physics/gamow or call 303-492-6952

Tickets: Free and open to the public

Decades on, Cerf and Kahn’s innovations allow some four billion people around the world to use the Internet, which has so far proved “future proof.” But there are issues that concern Cerf, including recent moves by the Federal Communications Commission to undermine “net neutrality,” the principle that service providers must treat all data on the Internet with the same set of rules and not discriminate or create differential pricing for customers based on content, platform, destination or any other factor.

In December, the FCC eliminated net neutrality in a rather arcane shift, by moving regulation of the Internet from one framework — which was created for telecommunications, not the Internet — to another, unregulated scheme.

A side effect of the move, Cerf says, is that the Internet now has been deregulated and there is “no barrier to potentially abusive behavior by current” Internet service providers, who could, theoretically, limit customers’ access to some content or make it very expensive. Cerf opposed the move, but acknowledges that advocates of the change argue that the previous framework opened the door to potential regulatory overreach by some future “rogue FCC.”

“I’m very disappointed by the tack taken by the current FCC,” he says. “My preferred outcome would have been to leave the (previous regulatory framework) in place until Congress could pass new legislation specific to the Internet.”

A member of the National Science Board and National Academy of Engineering, Cerf is also concerned about the promotion of “alternative facts” and what he sees as a decline in critical thinking among many Americans.

“If we are going to do anything useful for future generations, we have to start teaching both children and adults to think critically about information, regardless of the source,” he says. “But that takes work and I confess that I’m afraid American society tends to be a little lazy when it comes to intellectual thinking.”

Vint Cerf also will give a free, public talk at Silicon Flatirons’ “Regulating Computing and Code” conference at 8:45 a.m. Sunday, Feb. 11, Wolf Law Building, CU Boulder campus. Info: http://bit.ly/2BT4HTO