Research Projects

Developing Principles for Effective Human Collaboration with Free-Flying Robots

Aerial robots hold great promise in supporting human activities during space missions and terrestrial operations. For example, free-flying robots may automate environmental data collection, serve as maneuverable remote monitoring platforms, and effectively explore and map new environments. Such activities will depend on seamless integration of human-robot systems. The objective of this research is to advance fundamental knowledge of human-robot interaction (HRI) principles for aerial robots by developing new methods for communicating robot status at a glance as well as robot interface technologies that support both proximal and distal operation. This work will design a signaling module that maps robot communicative goals to signaling mechanisms, which can be used in robot software architectures in a manner analogous to common robot motion planning modules. Additionally, this work will examine how robot interface design requirements differ for crew and ground control and develop techniques for scaling control systems across interaction distances.
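One way to picture the signaling module described above is as a lookup from a communicative goal and an interaction distance to a signaling mechanism. The sketch below is purely illustrative: the goal names, the signal descriptions, the 5 m proximal threshold, and the `plan_signal` function are all hypothetical and are not taken from the project.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Goal(Enum):
    """Hypothetical communicative goals a free-flying robot might have."""
    LOW_BATTERY = auto()
    INTENDED_DIRECTION = auto()
    TASK_COMPLETE = auto()


@dataclass(frozen=True)
class Signal:
    mechanism: str  # e.g. "light", "sound", "interface"
    pattern: str    # human-readable description of the signal


# Assumed mapping: (goal, operator is proximal?) -> signal.
# Proximal operators (e.g. crew) get at-a-glance cues on the robot itself;
# distal operators (e.g. ground control) get cues in a remote interface.
SIGNAL_TABLE = {
    (Goal.LOW_BATTERY, True): Signal("light", "slow red pulse"),
    (Goal.LOW_BATTERY, False): Signal("interface", "battery warning banner"),
    (Goal.INTENDED_DIRECTION, True): Signal("light", "sweep toward heading"),
    (Goal.INTENDED_DIRECTION, False): Signal("interface", "heading arrow on map"),
    (Goal.TASK_COMPLETE, True): Signal("sound", "short two-tone chime"),
    (Goal.TASK_COMPLETE, False): Signal("interface", "task status set to done"),
}


def plan_signal(goal, operator_distance_m, proximal_threshold_m=5.0):
    """Pick a signal for a goal, much as a motion planner picks a path for a pose goal."""
    is_proximal = operator_distance_m <= proximal_threshold_m
    return SIGNAL_TABLE[(goal, is_proximal)]


if __name__ == "__main__":
    print(plan_signal(Goal.LOW_BATTERY, operator_distance_m=2.0))
    print(plan_signal(Goal.LOW_BATTERY, operator_distance_m=400_000.0))
```

Keeping the mapping in a table separate from the selection logic means the same call can serve both crew and ground control, with only the distance argument changing.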

Investigators: Daniel Szafir

Sponsor: NASA Early Career Faculty Award

Coordinated Persistent Airborne Information Gathering: Cloud Robotics in the Clouds

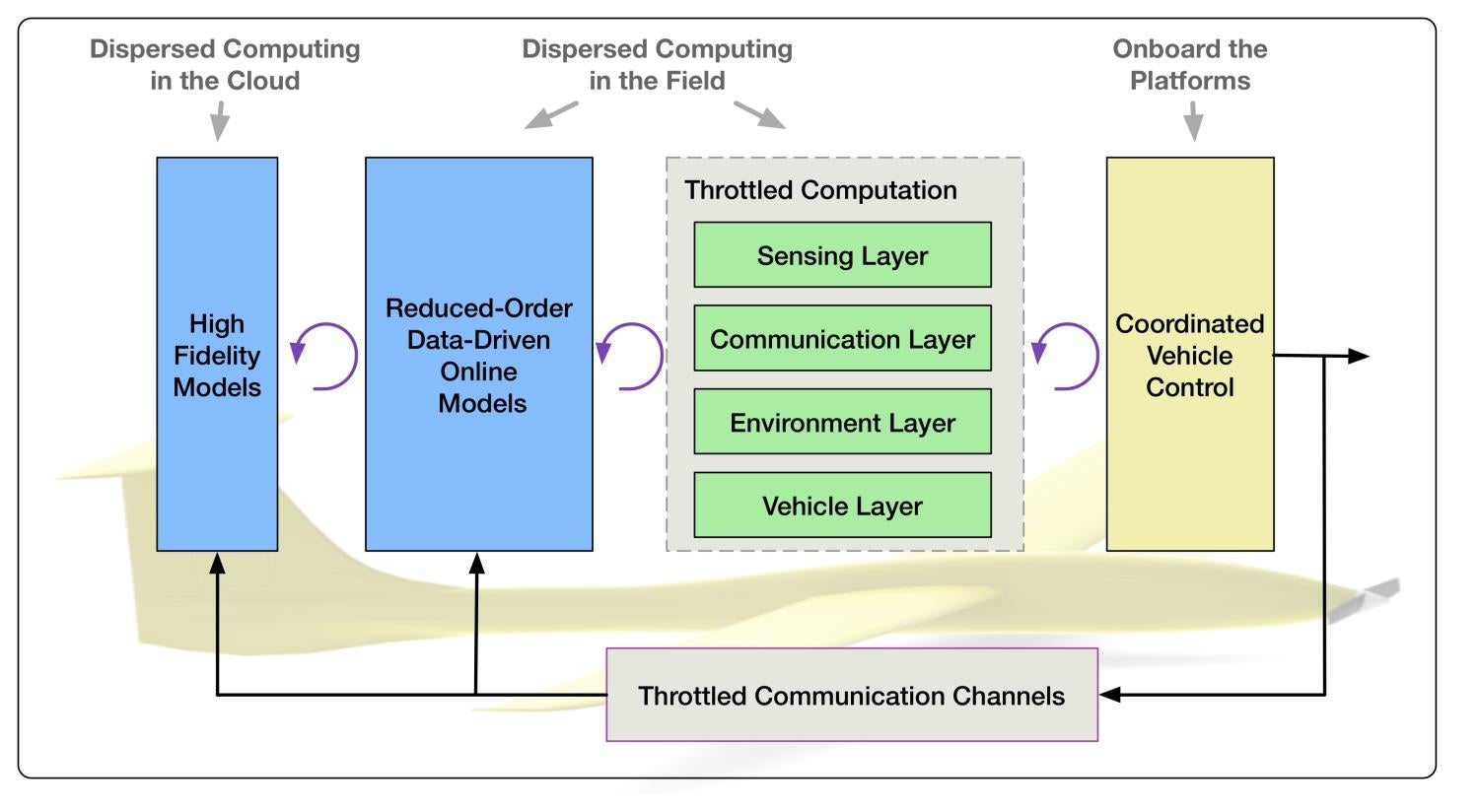

This project will investigate coordinated energy-aware information-gathering by dynamic, data-driven reasoning across four inter-related layers: the sensing layer that focuses on information-theoretic formulations of mission tasks; the communication layer that learns models of networked communication behavior for improved coordination in denied or stressed environments; the environment layer whereby the dynamics of the atmosphere drive aircraft performance; and the platform layer where sensors embedded into the UAS platform provide novel flight performance data.

Autonomous decision-making is enabled by reasoning with complex models that cannot be run onboard the sensing platforms. System performance relies on the integration of high-fidelity offline models with reduced-order low-fidelity models driven by dynamic data collected during mission execution. This project exploits the Dynamic Data-Driven Application Systems (DDDAS) paradigm by developing autonomous decision-making loops closed over multiple spatial and temporal scales. Global (re)planning algorithms will be integrated with receding horizon trajectory optimization and transfer learning to create a framework that can target observations while balancing solution quality and computational resources. Further, net-centric, cloud-computing software will be created that connects multiple physical systems with computation resources dispersed over wireless communication networks. While the proposed effort includes aspects of all four components of the DDDAS Basic Area Objectives, primary advances are in the areas of Mathematical and Statistical Algorithms and Application Measurement Systems and Methods.

Investigators: Eric Frew (PI), Brian Argrow

Sponsor: AFOSR Dynamic Data-Driven Application Systems Program

Leveraging Implicit Human Cues to Design Effective Behaviors for Collaborative Robots

Robots have the potential to significantly benefit society by actively collaborating with people in critical domains including manufacturing, healthcare, and space exploration. But to provide effective assistance, robots must be able to work with people in a natural, intuitive, and socially adept manner. Current human-robot collaborations require that people explicitly communicate their goals and desired responses to robotic partners. As a result, joint human-robot activities bear little resemblance to scenarios involving human-human teamwork, where people are able to understand their partner's implicit cues, such as eye gaze, facial expressions, and intonations, and intuit appropriate responses, such as moving to a certain location, preemptively fetching a tool, or providing a clarification. The goal of this project is to establish a research program that will explore the design of effective behaviors for collaborative robots by developing computational models that enable them to sense implicit human communicative cues and guide robot responses by inferring cue intent, and to evaluate the effectiveness of the new algorithms in human-robot studies. The research holds significant promise of benefiting society by helping to achieve a vision of robots acting as key contributors, partners, and assistants in human work, with applications across a range of activities including domestic housework, manufacturing, construction, healthcare, and space exploration.

To these ends, the project will address the challenge of designing effective collaborative robots by developing a preliminary framework, process, and set of methods to sense and respond to implicit human communicative behaviors. The approach will involve (1) observing and classifying implicit cues and responses for human-human teams engaged in an archetypical collaborative task, (2) developing computational models of the relationships between goals, cues, and responses using features and parameters extracted from observed behaviors, (3) integrating implicit cue sensing and response algorithms to guide robot behaviors in specific collaborative use cases, and (4) evaluating the effectiveness of these behaviors on collaborative task outcomes. This research will produce a set of generalizable design principles for collaborative robots, generate open-source algorithms showcasing practical implementations, and advance knowledge regarding computational understanding of human behaviors. Overall, the work will lead to robots that are able to work more effectively with people and accelerate the integration of assistive robots into society. It will synthesize theories of human communication, explore their application to human-robot interaction, and advance knowledge regarding how robots might provide assistance as human collaborators and the types of sensors necessary for robots working closely with human partners. Implicit sensing and response algorithms that have been empirically validated in HRI experiments will be disseminated as modules for the open-source Robot Operating System (ROS).

Investigators: Daniel Szafir

Sponsor: NSF CRII

Severe-storm Targeted Observation and Robotic Monitoring (STORM)

This project addresses the development of autonomous self-deploying aerial robotic systems (SDARS) that will enable new in-situ atmospheric science applications through targeted observation. SDARS comprises multiple robotic sensors and distributed computing nodes, including: (1) multiple fixed-wing unmanned aircraft, deployable Lagrangian drifters, mobile Doppler radar, and mobile command and control stations; (2) distributed computation nodes in the field and in the lab; (3) net-centric middleware connecting the dispersed elements; and (4) an autonomous decision-making architecture that closes the loop between sensing in the field and new online numerical weather prediction tools. The proposed effort draws on the expertise of the project team in the areas of robotics, unmanned systems, networked control, wireless communication, active sensing, and atmospheric science to realize the vision of bringing cloud robotics to the clouds. Combining autonomous airborne sensors with environmental models dispersed over multiple communication and computation channels enables the collection of information essential for examining the fundamental behavior of atmospheric phenomena.

Autonomous self-deploying aerial robotic systems will close significant capability gaps in conventional platforms’ abilities to collect the data necessary to answer a wide range of scientific questions. The motivating application for this work is improvement in the accuracy and lead time of tornado warnings. Other science applications that would benefit from the proposed work include thunderstorm outflows and gust fronts; landfalling hurricane boundary layer dynamics; planetary boundary layer physics studies (including many processes relevant to climate dynamics); atmospheric responses to fires; pollutant dispersion monitoring and forecasting; and terrain-driven circulation systems. Curriculum development, mentoring, and outreach activities will emphasize the complexity of modern engineered systems and their potential societal benefits in order to motivate the next generation of scientists and engineers.

Investigators: Eric Frew (PI), Brian Argrow (CU), Adam Houston (UNL), Chris Weiss (TTU), Volkan Isler (UMN), and Dezhen Song (TAMU)

Sponsor: NSF National Robotics Initiative

UAV Deployed Microbuoys for Arctic Sensing

This project will develop a concept for low-cost, rapidly deployable, environmentally friendly, unmanned sensor systems, including deployment and data reach-back from above the Arctic Circle, that can detect, track, and identify surface and subsurface targets. Our effort builds on our experience with the Air Deployed Microbuoy (ADMB) sensors from the MIZOPEX campaign. The ADMB have a flexible architecture that allows a range of additional sensors to be added through a variety of interfaces for this project. Additionally, to enable round-the-clock communications in the Arctic Ocean, we include an Iridium satellite modem. This project takes an innovative approach that combines the characteristics of acoustic signals from targets, pre-processing to compress information, precise GPS time tagging, low data-rate satellite communication, and feasible set pseudo inversion methods to produce robust target detection and localization solutions over wide areas and at low cost. These developments will enable the creation of a flexible system, launched from a small UAS, that will provide Arctic measurements for 30 days or more.

Investigators: Scott Palo and Dale Lawrence

Sponsor: DARPA

TALAF: A Comprehensive Framework to Develop, Refine, and Validate Learning Agents

This research will facilitate the creation and refinement of tactical teams of autonomous aerial assets that learn from experience gained in the course of high-fidelity live-virtual-constructive (LVC) mission simulations. TALAF (Tactical Autonomy Learning Agent Framework) will be distributed and dynamically configurable across an Internet Protocol (IP) network, allowing multi-actor scenarios. The ‘participants’ will include a mixture of agents employing decision logic to emulate human responses, plus actual humans performing their operational roles on virtualized hardware. Data associated with simulation conditions, situational awareness, actor responses, and Measures of Performance and Effectiveness (MOPs and MOEs) will be collected in the course of scenario progression.

The innovation proposed is to pass all relevant data to a Gaussian Process (GP) agent-learning engine that applies nonparametric Bayesian optimization techniques to refine the decision logic (tactics, rules, procedures) employed by various autonomous agents, and update their behaviors to gain improvements in their responses as efficiently as possible. This ‘virtual training’ of agents can be conducted by multiple learning engines, each with its own filtering of the world view. This will allow different assets to be automatically trained in the context of their own capabilities and information processes. The role of an agent in a deployed context need not be exclusive operation of an asset: the agent may serve as an “advisor” to the human operator, anticipating the probable actions of adversaries and providing real-time tips and guidance to provide a tactical advantage. This work will ultimately allow for human-machine blending that capitalizes on the strengths of each, arriving at a unified capability that is far superior to either one standing alone.
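To make the idea of GP-based Bayesian optimization concrete, here is a minimal sketch of such a refinement loop. It is not TALAF's implementation: it assumes a single scalar tactic parameter in [0, 1], and the `simulated_mission_score` function is a hypothetical stand-in for a Measure of Effectiveness returned by one simulation run. The kernel length scale, the candidate grid, and the expected-improvement acquisition rule are likewise illustrative choices.

```python
import math

import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel (unit variance) between two 1-D point arrays."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_query."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_tq.T @ alpha
    v = np.linalg.solve(chol, k_tq)
    var = np.clip(1.0 - np.sum(v * v, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best):
    """Expected improvement over `best` when maximizing."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def simulated_mission_score(theta):
    """Hypothetical MOE from one simulation run; peaks at theta = 0.6."""
    return -(theta - 0.6) ** 2


def refine_parameter(score_fn, initial_thetas, n_iterations=10):
    """Repeatedly pick the parameter with the highest expected improvement."""
    candidates = np.linspace(0.0, 1.0, 201)
    thetas = np.array(initial_thetas, dtype=float)
    scores = np.array([score_fn(t) for t in thetas])
    for _ in range(n_iterations):
        # Standardize scores so the unit-variance GP prior is reasonable.
        scale = scores.std() or 1.0
        y = (scores - scores.mean()) / scale
        mean, std = gp_posterior(thetas, y, candidates)
        ei = expected_improvement(mean, std, y.max())
        next_theta = candidates[np.argmax(ei)]
        thetas = np.append(thetas, next_theta)
        scores = np.append(scores, score_fn(next_theta))
    best = int(np.argmax(scores))
    return thetas[best], scores[best], thetas


if __name__ == "__main__":
    theta, score, history = refine_parameter(simulated_mission_score, [0.0, 0.5, 1.0])
    print(f"best theta = {theta:.3f}, score = {score:.5f}, runs = {len(history)}")
```

Each iteration spends one (expensive) simulation run where the GP predicts the best trade-off between a high score and high uncertainty, which is what makes this family of methods sample-efficient.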

Investigators: Nisar Ahmed

Sponsor: Orbit Logic (AFRL STTR)

NSF Center for Unmanned Aircraft Systems

The University of Colorado is a founding site of an Industry/University Cooperative Research Center for Unmanned Aircraft Systems to address the issues common to the UAS industry that limit widespread application across military, civil, and commercial domains. The purpose of the center is to provide innovative solutions to key technical challenges and superb training for future leaders in the unmanned aircraft systems industry. A core strength of the CUAS research teams is our extensive experience in conducting flight test operations of small unmanned aircraft systems. Researchers in the center perform flight tests under FAA-approved Certificates of Authorization (COA) and in FAA-designated restricted airspace and have access to state-of-the-art university labs and facilities.

CUAS projects being carried out at CU include: Information and Distributed Optimization; Harnessing Human Perception in UAS via Bayesian Active Sensing; Emerging sUAS In-Situ Sensing Technology; Assured Autonomy; Guidance and Control for a UAS Providing Communication Services.

Investigators: Eric Frew (PI), Nisar Ahmed, Brian Argrow, Tim Brown, Dale Lawrence, and Jason Marden

Sponsor: NSF Industry/University Cooperative Research Center (IUCRC)

The Unmanned Aircraft System and Severe Storms Research Group

The Unmanned Aircraft System (UAS) and Severe Storms Research Group (USSRG) is a consortium of public and private sector collaborators led by the University of Nebraska–Lincoln and the University of Colorado Boulder. USSRG aims to bring together collaborators from universities, federal laboratories, the private sector, and others who share a vision of bringing UAS to bear on the study of severe storms.

The collaborative team leading USSRG is composed of the University of Colorado Boulder (UCB, Dr. Brian Argrow) and the University of Nebraska–Lincoln (UNL, Dr. Adam Houston). For nearly a decade, the UCB–UNL team has developed solutions to the scientific and technological challenges involved in using UAS to study severe storms. Their successes have included:

- the first direct sampling of a terrestrial mesoscale phenomenon in the US as part of the Collaborative Colorado-Nebraska UAS Experiment (CoCoNUE) in 2009

- the first intercept of a supercell thunderstorm by a UAS in May 2010 during the second Verification of the Origins of Rotation in Tornadoes Experiment (VORTEX2)

- the first ever sampling of a supercell rear flank internal surge by a UAS in June 2010 during VORTEX2

- the first simultaneous multi-UAS direct sampling of a thunderstorm outflow during the Multi-sUAS Evaluation of Techniques for Measurement of Atmospheric Properties (MET-MAP) experiment in 2014

Efficient Reconfigurable Cockpit Design and Fleet Operations using Software Intensive, Networked and Wireless Enabled Architecture (ECON)

The purpose of this project is to investigate the utility of software-intensive, networked, wireless, reconfigurable cockpit design for safe and efficient fleet operations. Network-enabled, cloud-based services have the ability to improve aircraft flight operations and fleet management. The cloud-based services and architecture will increase operational consistency, reduce the need for software and some sub-systems to reside in every aircraft, and enable optimization across the entire fleet. However, the use of cloud-based services relies on secure network communication in order to ensure the timely flow of information to dispersed decision-making elements, to ensure the veracity of data sources in the network, and to ensure the safety and validity of plans generated off-board the individual aircraft that ultimately act on the cloud-based decision. As a result, quality of service and cybersecurity of the supporting network infrastructure are of major concern. A large collaborative team is being led by the NASA Ames Research Center. We will make specific contributions in the area of cybersecurity for networked operations.

Investigators: Eric Frew (PI) and Eric Keller

Sponsor: NASA Learn

Esonde Lightning Detection Instrument

The Jonathan Merage Foundation (JMF) has a long-standing history of partnering with the University of Colorado Boulder’s College of Engineering and Applied Science. In 2010, the JMF provided seed funding to develop a mobile control station for the Tempest UAS to operate in the VORTEX2 field campaign, the largest experiment ever deployed to study tornadoes. In 2014, the foundation committed an additional gift that has allowed RECUV to procure a tracker vehicle and to work with researchers at New Mexico Tech to develop a new lightning detection instrument to be integrated into the Tempest UAS and its successor, the TTwistor UAS. The lightning detection system is being designed to measure electric field changes associated with cloud-to-ground lightning strikes.

Investigators: Brian Argrow

Sponsor: Jonathan Merage Foundation

Evaluation of Routine Atmospheric Sounding Measurements using Unmanned Systems (ERASMUS)

The use of Unmanned Aircraft Systems (UAS) is becoming increasingly popular for a variety of applications. One way in which these systems can provide revolutionary scientific information is through the routine measurement of atmospheric conditions, particularly properties related to clouds, aerosols, and radiation. Improved understanding of these topics at high latitudes, in particular, has become very relevant due to observed decreases in ice and snow in polar regions. ERASMUS is a field campaign recently funded by the United States Department of Energy (US DOE) that will feature the use of UAS to obtain atmospheric measurements over the DOE Atmospheric Radiation Measurement (ARM) program’s Oliktok Point facility.

Investigators: Dale Lawrence, Brian Argrow, Scott Palo, Jim Maslanik, Gijs deBoer (NOAA)

Sponsor: Department of Energy

Persistent Information-Gathering with Airborne Surveillance and Communication Networks in Fading Environments

The purpose of this collaborative effort is to develop and evaluate a hierarchical framework for real-time path planning for persistent information-gathering tasks in fading communication environments. Novel features of the proposed work are learning the radio environment and background winds; consideration of the energy that can be gained from wind field patterns (energetics); and a persistent, communication-aware, information-based formulation of sensing tasks (informatics) that can integrate multiple sensing modalities.

Investigators: Eric Frew

Collaborator: Han-Lim Choi, Korea Advanced Institute of Science and Technology (KAIST)

Atmospheric Profiles and Clouds over the Chukchi Sea

This project develops a folding-wing UAS for high-speed air deployment from a Coast Guard C-130, along with infrared cloud margin sensors. The system will be tested off the north shore of Alaska.

Investigators: Dale Lawrence and Jim Maslanik

Sponsor: Office of Naval Research

Quantifying Kelvin-Helmholtz Turbulence Processes

The goal of this project is to collect in-situ turbulence measurements using the DataHawk and compare them with 50 MHz radar returns in Jicamarca, Peru.

Investigators: Dale Lawrence, Ron Woodman (IG Peru)

Sponsor: National Science Foundation